Data Structures and Algorithms Week3: Associative Arrays and Hash tables

Associative Arrays

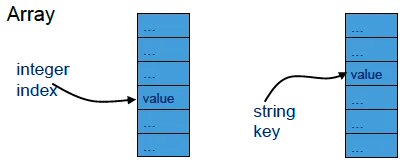

Array

- An array maps an index (usually an integer) to an element, of any type.

- Arrays in Java have indexes starting at 0.

- Access by indexing

a[i] /* 0 <= i < size */- An array is a function from natural numbers to elements all of the same type.

So can we use an array every time we want to connect numbers to values?

Actual example: exam scoring

- Class of about 300 students

- Student numbers 5 digits

- Students usually marked once, or sometimes twice (borderline).

- Program to check that no student marked more than twice

static final int range = 100000; // 5-digit numbersint [ ] timesMarked= new int [range];for (int i= 0; i != range ; i++) timesMarked[i] = 0;…timesMarked[studentNum]= timesMarked[studentNum] + 1;Very sparsely used array!

0.3% of array is used! Very space-inefficient.

We need to map a key to a value

- Example:

link (‘map’) key (student number) to value (student information). - This is called a mapping.

- At most one value for any key.

- Also called a function, from key to value.

Example: email-address lookup

“Sharon” → “sharoncurtis@brookes.ac.uk"

"David” → “dlightfoot@brookes.ac.uk"

"Ian” → “ibayley@brookes.ac.uk”

Pronounce ”→” as “maps to”

Associative array

- ADT(abstract data type)

- An associative array is an abstract data type

- it is composed of a collection of unique keys and a collection of values, where each key is associated with one value (or set of values).

- The operation of finding the value associated with a key is called a lookup or indexing, and this is the most important operation supported by an associative array.

- The relationship between a key and its value is sometimes called a mapping or binding. For example, if the value associated with the key “bob” is 7, we say that our array

maps“bob” to 7. - Associative arrays are very closely related to the mathematical concept of a function with a finite domain.

Possible example: sequence of entries

public class DelegateSeq implements IDelegateDB { private Delegate[] table; private final static int tableSize = 10; // or whatever private int numEntries; public DelegateSeq() { System.out.println("Simple sequence"); table = new Delegate[tableSize]; clearDB(); } public void clearDB() { numEntries = 0; }}Sequence of entries : put

public Delegate put(Delegate delegate) { assert numEntries != tableSize; // very simple assert delegate != null; String name = delegate.getName(); assert name != null && !name.equals(""); Delegate previous; int pos = findPos(name); // we have to check not already in table. Time efficiency of findPos? (next slide) if (pos == numEntries) { // new numEntries++; previous = null; } else { previous = table[pos]; } table[pos] = delegate; return previous;}Sequence of entries: findPos

private int findPos(String name) { assert name != null && !name.equals(""); int i = 0; while (i != numEntries && !table[i].getName().equals(name)) i++; return i;}time complexity of findPos?

Simple linear search: O(n)

So put (and also get) have linear time complexity.

Simple sequence gives O(n) behaviour

- Can be improved by using an ordered sequence.

- Then we can use a binary search.

Ordered sequence: findPos

private int findPos(String name) { // returns position where name is, or would go assert name != null && !name.equals(""); int left = 0, right = numEntries; while (left != right) { int mid = (left + right) / 2; if (name.compareTo(table[mid].getName()) > 0) left = mid + 1; else right = mid; } return left; // or right, since left == right}This is a binary search, so O(log n) – much better than unordered

Ordered sequence: put

public Delegate put(Delegate delegate) { // assertions as before Delegate previous; int pos = findPos(name); if (pos == numEntries || !name.equals(table[pos].getName())) { // new int i = numEntries; while (i != pos) { // ‘budging up’ to keep the table ordered table[i] = table[i-1]; i--; } numEntries++; previous = null; } else { previous = table[pos]; } table[pos] = delegate; return previous;}But the ‘budging up’ is O(n), so not much improvement.

Ordered not much improvement

- Self indexing can be O(1) – constant time. But works only in very special cases.

- But leads to very sparse use of space in most cases.

- But leads to very sparse use of space in most cases.

- Using a sequence works OK, but has O(n) time complexity.

- Ordered sequence is better: put is O(n), but get is O(log n) because of the binary search.

- But we want O(1) in all cases.

Answer: Hash tables

Hans Peter Luhn IBM, 1954

Very clever idea!

We will see how they are made available in the Java Base-Class Library (API) and also how they work.

Hans Peter Luhn

Hans Peter Luhn

Java API HashMap : Method Summary

void clear()Removes all mappings from this map.boolean containsKey(K key)Returns true if this map contains a mapping for the specified key.boolean containsValue(V value)Returns true if this map maps one or more keys to the specified value.V get(K key)Returns the value to which the specified key is mapped in this identity hash map, or null if the map contains no mapping for this key.boolean isEmpty()Returns true if this map contains no key-value mappings.Set<K> keySet()Returns a set view of the keys contained in this map.V put(K key, V value)Associates the specified value with the specified key in this map.

(Returns the old discarded value – same with remove below)V remove(K key)Removes the mapping for this key from this map if present.int size()Returns the number of key-value mappings in this map.Collection<V> values()Returns a collection view of the values contained in this map.

Hash tables

retrieve 检索

modulo 取模

quadratic probing 平方探查

load factor 负载因子

Hash tables (associative arrays)

- Content topics:

- Hashing

- Hash functions

- Collision resolution

- Probing (open addressing)

- Chaining

Typical scenario

- Fast storage and searching of large tables, lists, databases etc

- Need to minimize processing operations (comparisons and index calculations) per search

- Numerous application areas for fast table-lookup

- For example:

databases, dictionaries, compiler symbol table, search engines, password lookup …

What’s the problem

- How to store and then be able to locate items from a large data set very quickly

- The problem is whatever search methods we use, the following fact will not change:

The more data we have, the slower the access

What’s the problem - realistic demand

- The more data we have, the slower the access

- For example:

- Suppose a compiler allows 6-character symbol names that start with an upper-case letter (A-Z) followed by 5 alpha-numeric (0-9, A-Z)

- How many possible symbol names could there possibly be?

- Answer: or approximately

- It would not be sensible to allocate this amount () of memory and use fewer than 10,000 elements (an amount of elements that a program usually handles)

A 16-digit bank card

A 16-digit bank card

- For example:

- How many possible accounts

- World population

- Proportion of accounts we may use

-

Too wasteful

No necessary

- How many possible accounts

What’s the problem- efficiency

- Linear search

- With N items on average, we need to trawl through N/2 of them to find the one we want. So a linear search is O(N)

- With twice the number of data items, the search time doubles

- Binary Search

- This is the technique we use to find an entry in a telephone directory in which the entries are sorted. The complexity of the binary search is O(logN)

Search-Algorithm Performance

- Linear search is O(N) – is the worst-possible case, linear time

- Binary search is O(log N) – is better, logarithmic time, but requires data to be sorted

- What we desire is O(1) or O(N0)

- i.e. search time is constant, independent of data size N

- so we can reach any data in a fixed number of operations, i.e. constant time

’Associative’ array

- Suitable data structures for associative array:

- Binary search tree [O(logn)]

- Hash table [O(1)]

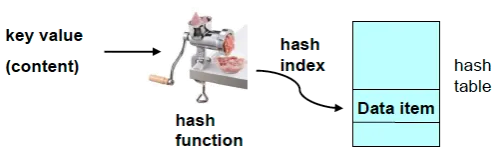

‘Hashing’

- ‘Messing things up’, for example:

- Corned-beef hash

// 玉米牛肉糜 - Hash brown potatoes

// 薯饼

- Corned-beef hash

- Hence the meat-mincing machine in next slide…

- Hashing for CS:

A method of transforming a search key into an address, for the purpose of fast and efficient storage and retrieval of data items.

Example: finding someone quickly

|  |  |  |

|---|

How does hashing work

- Instead of storing each new data item in the next free memory location, we need to find a way to find its storage location based on a key value from the data content.

- That way we will be able to locate each data item, using its key value.

- Hashing:

- The process of build a relationship between constant numbers (such as 1, 2, 3…) and particular content.

- So the location to store/find a data item is obtained using a hash function applied to the data item (key) value

- The hash function maps a key to an arbitrary (but not ‘random’) integer ‘bucket number’

What is a hash function

- Hash function(method):

- The way/method to convey the particular contents into constant numbers.

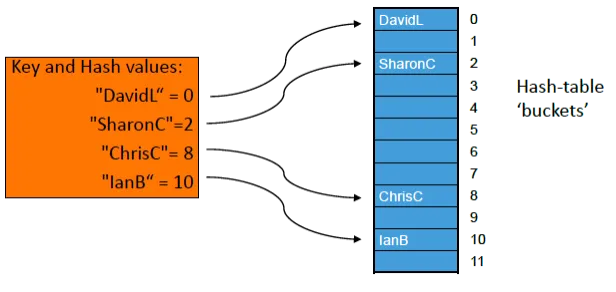

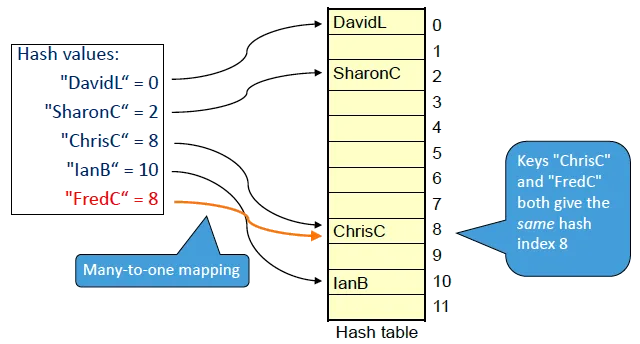

A hashing example

- Lets’ store some names of a few people in a table of size 12, so index 0 to 11 in Java

- The key value for each person is simply the name string, e.g. “

ChrisC” - The hash function is obtained from the sum of the ASCII codes of the characters in each string.

| A | B | C | D | E | F | G | H | I | J | K | L | M |

| 65 | 66 | 67 | 68 | 69 | 70 | 71 | 72 | 73 | 74 | 75 | 76 | 77 |

| N | O | P | Q | R | S | T | U | V | W | X | Y | Z |

| 78 | 79 | 80 | 81 | 82 | 83 | 84 | 85 | 86 | 87 | 88 | 89 | 90 |

| a | b | c | d | e | f | g | h | i | j | k | l | m |

| 97 | 98 | 99 | 100 | 101 | 102 | 103 | 104 | 105 | 106 | 107 | 108 | 109 |

| n | o | p | q | r | s | t | u | v | w | x | y | z |

| 110 | 111 | 112 | 113 | 114 | 115 | 116 | 117 | 118 | 119 | 120 | 121 | 122 |

| C | h | r | i | s | C |

|---|---|---|---|---|---|

| 67 | 104 | 114 | 105 | 115 | 67 |

- To obtain an index in the range 0 to 11 calculate: character code sum, modulo 12

- So, hash = (sum of ASCII codes for each character in string) modulo 12 (% operator)

- Range of hash index is 0 to 11

Hash value for “ChrisC”

Sum of character codes: 67 + 104 + 114 + 105 + 115 + 67 = 572

572 mod 12 is 8

So hash value is 8

Similarly:

- “ChrisC” → 8

- “SharonC“→ 2

- “DavidL“→ 0

- “IanB“→ 10

Simple Hashing

Too-simple hash function

int hashFirstChar(String s) { // just value of first character return s.charAt(0) % tableSize; // mod tableSize gives a value in range 0 .. tableSize-1}(Can do the % tableSize later instead)

Modify simple hash function-v1

final int tableSize = 12; // for exampleprivate static int hashSum(String key) { int hashValue = 0; for(int j = 0; j != key.length(); j++) hashValue = hashValue + (int)key.charAt(j); return hashValue; // or hashValue % tableSize here or later}Modify simple hash function-v2

private static int hashWeighted(String key) { int hashValue = 0; for(int j = 0; j != key.length(); j++) hashValue = j * hashValue + (int)key.charAt(j); // "j *" here is weighting return hashValue;}hashWeighted(“marcel”) = 34153

hashWeighted (“carmel”) = 33153

This hash function still has problem

Danger! Overflow ahead!

- hashWeighted(“Brzeczyszczykiewicz”)

= 1,876,978,831,955,724,338 - But biggest integer in 32 bits,

Integer.MAX_VALUE

= 2,147,483,647 Numeric overflow! Can crash program.

Possible solution avoiding overflow

private static int hashWeighted(String key) { int hashValue = 0; for(int j = 0; j != key.length(); j++) hashValue = (j * hashValue + (int)key.charAt(j)) % tableSize; return hashValue;}Some examples of hashing functions

- Division:

h(k) is the remainder (modulus) of key k after division by the size of the table, s.

h(k) = k % s; if k = 7155, s = 97 then h(k) = 74 - Truncation:

Take a few of the first or last characters of the key as the hash code. Can work well, provided the characters are evenly distributed.

- Mid-square:

the key is squared and the middle digits of the result are used as the hashed value.

k = 7155; k2 = 51194025; h(k) = 94 (from 511 94 025) - Folding:

the key is partitioned into several parts and the sum of the parts is used to produce the hash code

k = 7155; 71 + 55 → 126 or 17 + 55 → 72 - ……

Practice: calculate your name hash value

- Store some names of a group students, so table size should be 26, the key value for each one is simply the name string, e.g. “

Tony” - The hash function is Division:

- k is the sum of the ASCII codes of the letters in the name string;

- h(k) is the remainder (modulus) of key k after division by the size of the table, s.

h(k) = k % s; s = 26

| A | B | C | D | E | F | G | H | I | J | K | L | M |

| 65 | 66 | 67 | 68 | 69 | 70 | 71 | 72 | 73 | 74 | 75 | 76 | 77 |

| N | O | P | Q | R | S | T | U | V | W | X | Y | Z |

| 78 | 79 | 80 | 81 | 82 | 83 | 84 | 85 | 86 | 87 | 88 | 89 | 90 |

| a | b | c | d | e | f | g | h | i | j | k | l | m |

| 97 | 98 | 99 | 100 | 101 | 102 | 103 | 104 | 105 | 106 | 107 | 108 | 109 |

| n | o | p | q | r | s | t | u | v | w | x | y | z |

| 110 | 111 | 112 | 113 | 114 | 115 | 116 | 117 | 118 | 119 | 120 | 121 | 122 |

Practice

- Design your own hash method

- to calculate the hash value for your name string

- should be different from the example method mentioned above

- try to use easy way

The most handsome boy/most beautiful girl demonstrate

Simple Hashing – with Hash Clash

Search(Retrieval)

- Use the same hashing function and collision-resolution method as for inserting.

- At best can get O(1) – constant time.

Can’t do better than that!

Perfect hash function

A perfect hash function gives no collisions:

For all x and y:

- Possible if you know the data.

- Collision is when two or more keys have the same hash value.

- Rarely possible, but for example in a compiler,

- determine whether identifier is a reserved word.

- We know all the reserved words, so can construct a perfect hash function.

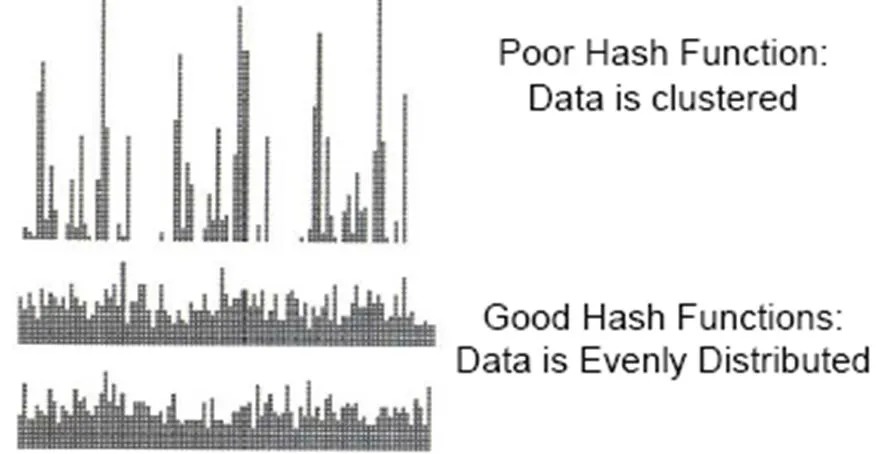

Desirable characteristics of a hashing function

- Quick to calculate

- Gives good spread – different keys map to different bucket/slot numbers (positions in the table)

- A perfect hashing function maps every key to a different bucket/slot number

- but in practice, sometimes get a ‘clash’ 💣 – more than one key is mapped to the same bucket number.

More about hash functions

- The hash function can potentially generate any hash table index, 0..TableSize-1 so we often use the modulo (%) operator to restrict the hash function range

- A hash function directs us to a single storage location for a particular data key value

- If we could guarantee that each possible key value maps on to a unique entry (1:1 mapping) then our hashing would be perfect.

- Because the hash table in practice is limited in size, and the difficulty of ensuring one-to-one mapping, we need to allow for collisions.

Try to reduce collisions!

Impossible to eliminate!

One Java built-in hashCode() method

Beware numeric overflow! Use % early.

public class Hash10 { public static void main(String[] args) { System.out.println("hashCode(\"A\")="+"A".hashCode()); System.out.println("hashCode(\"AB\")="+"AB".hashCode()); System.out.println("hashCode(\"ABC\")="+"ABC".hashCode()); System.out.println("hashCode(\"ABCD\")="+"ABCD".hashCode()); }}result:

hashCode("A")=65hashCode("AB")=2081hashCode("ABC")=64578hashCode("ABCD")=2001986Probability of hash clashes

- What is the probability that 2 (or more) people in a group of n share a birthday month?

Assume that birthdays are equally and randomly distributed over 12 months. Q(n) = probability that n people have distinct birthday months

Q(2) = 11/12 = 0.9166

Q(3) = 11/12 x 10/12 = 0.7639

Q(4) = 11/12 x 10/12 x 9/12 = 0.5729

etc

P(n) = 1-Q(n) = probability that 2 or more people of the n share a birthday month, so

P(2) = 0.0834

P(3) = 0.236

P(4) = 0.427

P(5) = 0.618

P(6) = 0.777

etc.

5 people > 50%

Probabilities of clashes in Hash Table

- A surprising example:

- Create a hash table with Table_Size=1,000,000 entries (index 0..Table_Size-1)

- Hash function creates an arbitrary index number (based on the key value) that is uniformly distributed in the range 0..Table_Size-1

- Keep adding hash entries to the table

- The probability that a collision will occur before 2,500 entries are made is 95%, i.e. when the table is only 0.25% full

- Conclusion: clashes can occur more frequently than we might intuitively expect.

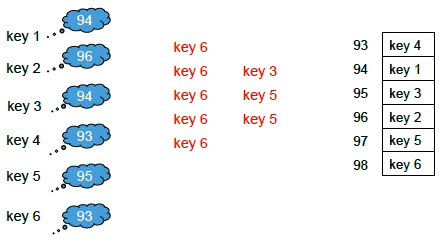

Collision Resolution – Linear probing

- Hash collisions can be resolved by probing to find an alternative location.

- If a collision occurs [hash(key1)=hash(key2)] look for next table entry that is free

- First calculate:

Index1 = hash(Key)(which cell is not available)hash(key)maps to range 0 ..Table_Size-1

- Next calculate

Index2 =(Index1 + probe) % Table_Size

- repeating with probe = 1,2,3,.. until a vacant entry is found (probe function)

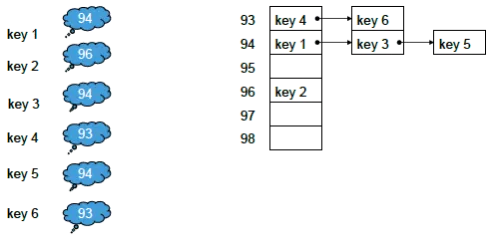

primary clustering has occurred for keys that hash to index 93 and 94

primary clustering has occurred for keys that hash to index 93 and 94

- Hash collisions can be resolved by probing to find an alternative location.

- Advantages:

- resolves hash clash

- simple algorithm

- Disadvantages:

- local clustering around the primary key (termed primary clustering, such as key1~key6)

- deletion is tricky (it leaves holes)

- Advantages:

Linear probing - Number of probes

- the data is stored within the hash table (also called ‘closed hashing’).

- We want to increase efficiency for probing

= easy to find a vacant cell to store the hash value

= give more space than the storage required

which one is easier to find a vacant cell to store hash code for key1~key6 (collision existed)

Collision resolution: Open addressing

- Open addressing

- The average number of probes for a search depends critically on the hash-table load factor, L

L = number_of_items_in_table / Table_Size often expressed as a percentage

Therefore the maximum capacity is simply the hash table size, and maximum load factor is 1.0.

Linear probing - Number of probes

- The average number of probes for a search depends critically on the hash-table load factor

- Average number of probes calculation is complex to derive

To solve clustering

- Primary clustering

- When keys hash to the same location and are then stored close by – this leads to primary clustering

- Avoid by allowing the probe function to provide a greater spread of keys

- Secondary clustering

- When keys hash to the same index and then follow the same probe sequence (same offset), this leads to secondary clustering

- Avoid with probe offset being non-linear (quadratic) or a function of the key (double hashing)

To solve clustering– Quadratic Probing

- As linear probing but instead of looking for next table entry, we move in jumps of a size involving the square of the probe integer

- First calculate:

Index1 = hash(Key)(which is not available) - Next calculate

Index2 = (Index1 + i²) mod Table_Size

with i=1,2,3, … - So jump on by 1, 4, 9, 16, 25 …

- First calculate:

- Advantages:

- As linear and pseudo-random probing but primary clustering is reduced.

- Also simpler than pseudo-random probing

- Disadvantage:

- (of all probing methods) is a degraded performance when the table starts to become full.

- Can lead to infinite loop since empty cells might be repeatedly skipped over. Need to detect this and avoid it. (OK if load factor < 50%.)

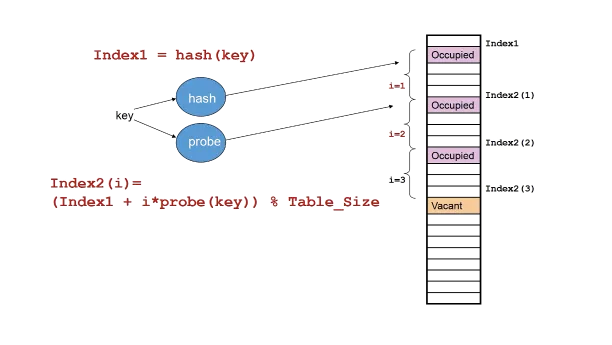

To solve clustering– Double Hashing

- can avoid both primary and secondary clustering.

- uses two hash functions: initial hash, and probe

Index1 = hash(key)

{ideally maps to 0..Table_Size-1} - If Index1 entry is occupied use a probe function to locate a vacant position

Index2 = (Index1 + i * probe(key)) % Table_Size

(noteprobe(key)computed only once) - Probe index i = 1, 2, 3,… until a vacant position is found – but you should limit the number of probes to avoid wrap around to the same index and thus possible infinite loop.

- Can require fewer probes, giving better performance than linear probing

- Ensure probe(key) > 0 , ideally probe(key) maps to 1..Table_Size-1

Linear probing is double hashing when

probe(key) = ? 1

Double hashing

Double hashing:

Double hashing:

hash is the first function

probe is the second function

Open-Addressing Hashing Efficiency

- Average steps of probes

| method | successful searches | unsuccessful searches | ||||

|---|---|---|---|---|---|---|

| load factor | 50% | 80% | 90% | 50% | 80% | 90% |

| linear probing | 1.5 | 3.0 | 5.5 | 2.5 | 13.0 | 50.0 |

| quadratic probing | 1.4 | 2.2 | 2.9 | 2.2 | 5.8 | 11.4 |

| double hashing | 1.4 | 2.0 | 2.6 | 2.0 | 5.0 | 10.0 |

collision resolution: chaining

- Separate chaining

- start a linked list to store the data for each key for that maps to the hash-table location

- each hash table entry can then accommodate a list of key values that share the same hash value

- Since each hash table entry can be a list there is no limit to the hash-table capacity, so the load factor may exceed 1.0.

- Linked lists within the hash table handle collisions

- Alternative to ‘open addressing’, Linked list of all elements whose keys have same hash index.

collision resolution: chaining example

import java.util.LinkedList;import java.util.ListIterator;public class Hash11 { public static void main(String[] args) { final int HTS = 12; // hash table size LinkedList[] staff=new LinkedList[HTS]; for(int i=0; i<HTS; i++) staff[i]=new LinkedList(); addStaff(staff,"Sharon"); addStaff(staff,"Chris"); addStaff(staff,"Ian"); addStaff(staff,"David"); addStaff(staff,"Peter"); addStaff(staff,"Muhammad"); addStaff(staff,"Arantza"); addStaff(staff,"Ken"); addStaff(staff,"Richard"); addStaff(staff,"Hong"); addStaff(staff,"William"); addStaff(staff,"Mark"); addStaff(staff,"Bob"); addStaff(staff,"Clare"); addStaff(staff,"Faye"); ListIterator iterator=staff[0].listIterator(); for(int i=0; i<HTS; i++) { iterator=staff[i].listIterator(); System.out.print("staff["+i+"]: "); while (iterator.hasNext()) System.out.print(iterator.next()+" "); System.out.println(); } } private static void addStaff(LinkedList[] staff, String key) { final int HTS = 12; // hash table size staff[hash(key)].addLast(key); } private static int hash(String key) { final int HTS = 12; // hash table size return Math.abs(key.hashCode() % HTS); }}run:

staff[0]: Hongstaff[1]: Richardstaff[2]:staff[3]: Sharonstaff[4]: Muhammad Kenstaff[5]: Arantza William Mark Bobstaff[6]:staff[7]: Chris Clarestaff[8]: David Peterstaff[9]:staff[10]: Ianstaff[11]: FayeBUILD SUCCESSFUL (total time: 0 seconds)Hashing with Chaining

- Average number of probes

- For the number of probes (or comparisons), first determine the average chain length for cells having a chain

- In the illustration the average chain length is

- For an unsuccessful search the complete chain is inspected. The number of comparisons is this average chain length, so is 2

- For a successful search on average the search travels midway along the chain. For chain length k, the mid length is , so with an average chain length of 2 the mid length is

Linear Probing vs Chaining

| Linear Probing | Chaining |

|---|---|

| - Can require less memory (best for small data records) - Best when load factor is low - No pointers or memory allocation, so safe operation - Insertion can be quicker, without memory allocation - Memory references more localized | - Simple to implement - Less sensitive to clustering, but need to monitor chain lengths because searching in a chain is linear, O(n) - Better for high load-factor situations as hash table linked lists do not fill up - Better for large data records |

Hashing Performance

- Best Case (ideal) O(1)

- Worst case (all keys hash to same slot) O(n)

- Performance very dependent on hash table Load Factor

Load Factor = number of items in table / table size - Likelihood of collision strongly related to load factor

- Load Factor (L) = 0 represents empty table

- Open Addressing: 0 ≤ L ≤ 1, (hash table can only fill to capacity)

- Chaining: 0 ≤ L, (so L can exceed 1)

- Typically performance becomes poor when table > 80% full

- Rebuild with a bigger table to improve performance if table becomes full

Disadvantages of hash tables

- The size of the table is fixed and must be estimated in advance. It cannot be changed at runtime.

- Table size should be about 10% larger than the maximum expected number of entries.

- Hashing algorithms are designed for efficient insertion and retrieval. Deletions are cumbersome and should be avoided. (Don’t just replace entry by null – “example: Where’s Wally”)

Use ‘lazy deletion’; don’t really remove – just mark entry as inactive. - Contents not stored in order, so sorting needed.

- The space needed for pointers for chaining could be used for a larger table.

Summary

- Access time is not dependent on the size of the table, at best O(1)

- Good for storing data not directly representable as array indexes,

or for storing sets of data from very large data sets. - Key values mapped to index by hashing function.

- Different keys mapping to same index means collision.

- Collision resolution:

- ‘open addressing’ using various ‘probing’ algorithms;

- chaining (most efficient, but uses more memory).

- Hashing efficiency depends critically on hash-table load factor and degree of clustering

References

- Robert Sedgewick, Algorithms in Java, Addison-Wesley, Ch 14.

- Mark Allen Weiss, Data Structures and Algorithm Analysis, Addison-Wesley, Ch 5

- Cormen, Leiserson and Rivest, Introduction to Algorithms, Ch 12

- Niklaus Wirth, Algorithms+Data Structures=Programs, Prentice-Hall

- Wikipedia: https://en.wikipedia.org/wiki/Hash_table

Extra: history

- The idea of hashing arose independently in different places.

- In January 1953, H. P. Luhn wrote an internal IBM memorandum that used hashing with chaining.

- Gene Amdahl, Elaine M. McGraw, Nathaniel Rochester, and Arthur Samuel implemented a program using hashing at about the same time. Open addressing with linear probing (relatively prime stepping) is credited to Amdahl, but Ershov (in Russia) had the same idea.

支持与分享

如果这篇文章对你有帮助,欢迎分享给更多人或赞助支持!